I am a third-year PhD student at the University of Bristol working with Prof. Dima Damen. I recently completed a research visit at the Max Planck Institute for Intelligent Systems, where I was advised by MPI Director Michael Black. My research interests lie in Computer Vision, Pattern Recognition, and Machine Learning. Currently, I am devising learning-based methods for understanding and exploring various aspects of first-person (egocentric) vision. Previously, at CVIT, IIIT Hyderabad, I worked with Prof. C.V. Jawahar and Prof. Chetan Arora on unsupervised procedure learning from egocentric videos. Earlier, I worked on improving word recognition and retrieval in large document collections with Prof. C.V. Jawahar and on 3D Computer Vision with Prof. Shanmuganathan Raman.

My ultimate goal is to contribute to the development of systems capable of understanding the world as we do. I’m an inquisitive person, and I’m always willing to learn about fields including, but not limited to, science, technology, astrophysics, and physics.

CV / Google Scholar / GitHub / LinkedIn / arXiv / ORCID

News

March, 2026 : Reconstructing Objects along Hand Interaction Timelines in Egocentric Video got accepted to the Sense of Space (SaS) Workshop at CVPR 2026!

Oct, 2025 : Recognised as an outstanding reviewer at ICCV 2025.

Sept, 2025 : Visiting MPI-PS as a Guest Scientist working with Michael Black!

June, 2025 : Co-organising the Second Joint Egocentric Vision (EgoVis) Workshop at CVPR 2025 in Nashville.

Feb, 2025 : HD-EPIC: A Highly-Detailed Egocentric Video Dataset got accepted to CVPR 2025!

Feb, 2025 : Introducing HD-EPIC, A Highly-Detailed Egocentric Video Dataset featuring ground-truth covering recipe steps, fine-grained actions, ingredients with nutritional values, moving objects, and audio annotations all grounded in 3D!

Oct, 2024 : Our survey paper “An Outlook into the Future of Egocentric Vision” appeared in its final form in IJCV, Vol. 132.

See all news

Machine Learning and Computer Vision (MaVi) @ University of Bristol

Publications

A constrained optimisation framework that reconstructs object poses in egocentric video by modelling temporal constraints, from static to stably grasped.

Zhifan Zhu, Siddhant Bansal, Shashank Tripathi, Dima Damen

Conference on Computer Vision and Pattern Recognition’s SaS Workshop (CVPR-W), 2026

Paper (ArXiv) / Project Page / Code / Qualitative Results Video

A validation dataset of kitchen-based egocentric videos with detailed, interconnected annotations on recipe steps, actions, ingredients, objects, audio, and 3D-grounded scene elements via digital twinning and gaze. Annotations include nutritional values, object locations, and fixture details.

Toby Perrett, Ahmad Darkhalil, Saptarshi Sinha, Omar Emara, Sam Pollard, Kranti Parida, Kaiting Liu, Prajwal Gatti, Siddhant Bansal, Kevin Flanagan, Jacob Chalk, Zhifan Zhu, Rhodri Guerrier, Fahd Abdelazim, Bin Zhu

Conference on Computer Vision and Pattern Recognition (CVPR), 2025

Paper (ArXiv) / Project Page / Download HD-EPIC / Explore Samples / Video (YouTube)

We propose the HOI-Ref task to understand hand-object interaction using Vision Language Models (VLMs). We introduce the HOI-QA dataset with 3.9M question-answer pairs for training and evaluating VLMs. Finally, we train the first VLM for HOI-Ref, achieving state-of-the-art performance.

Siddhant Bansal, Michael Wray, Dima Damen

Ego-Exo4D is a diverse, large-scale multi-modal, multi-view, video dataset and benchmark collected across 13 cities worldwide by 839 camera wearers, capturing 1422 hours of video of skilled human activities.

We present three synchronized natural language datasets paired with videos: (1) expert commentary, (2) participant-provided narrate-and-act, and (3) one-sentence atomic action descriptions.

Finally, our camera configuration features Aria glasses for ego capture, time-synchronized with 4-5 stationary GoPros as the exo capture devices.

Kristen Grauman, Andrew Westbury, Lorenzo Torresani, Kris Kitani, Jitendra Malik, Triantafyllos Afouras, Kumar Ashutosh, Vijay Baiyya, Siddhant Bansal, Bikram Boote, Eugene Byrne, Zach Chavis, Joya Chen, Feng Cheng, Fu-Jen Chu, Sean Crane, Avijit Dasgupta, Jing Dong, Maria Escobar, Cristhian Forigua, Abrham Gebreselasie, Sanjay Haresh, Jing Huang, Md Mohaiminul Islam, Suyog Jain, Rawal Khirodkar, Devansh Kukreja, Kevin J Liang, Jia-Wei Liu, Sagnik Majumder, Yongsen Mao, Miguel Martin, Effrosyni Mavroudi, Tushar Nagarajan, Francesco Ragusa, Santhosh Kumar Ramakrishnan, Luigi Seminara, Arjun Somayazulu, Yale Song, Shan Su, Zihui Xue, Edward Zhang, Jinxu Zhang, Angela Castillo, Changan Chen, Xinzhu Fu, Ryosuke Furuta, Cristina Gonzalez, Prince Gupta, Jiabo Hu, Yifei Huang, Yiming Huang, Weslie Khoo, Anush Kumar, Robert Kuo, Sach Lakhavani, Miao Liu, Mi Luo, Zhengyi Luo, Brighid Meredith, Austin Miller, Oluwatumininu Oguntola, Xiaqing Pan, Penny Peng, Shraman Pramanick, Merey Ramazanova, Fiona Ryan, Wei Shan, Kiran Somasundaram, Chenan Song, Audrey Southerland, Masatoshi Tateno, Huiyu Wang, Yuchen Wang, Takuma Yagi, Mingfei Yan, Xitong Yang, Zecheng Yu, Shengxin Cindy Zha, Chen Zhao, Ziwei Zhao, Zhifan Zhu, Jeff Zhuo, Pablo Arbelaez, Gedas Bertasius, David Crandall, Dima Damen, Jakob Engel, Giovanni Maria Farinella, Antonino Furnari, Bernard Ghanem, Judy Hoffman, C. V. Jawahar, Richard Newcombe, Hyun Soo Park, James M. Rehg, Yoichi Sato, Manolis Savva, Jianbo Shi, Mike Zheng Shou, Michael Wray

Conference on Computer Vision and Pattern Recognition (CVPR), 2024

Paper / Project Page / Video / Meta blog post

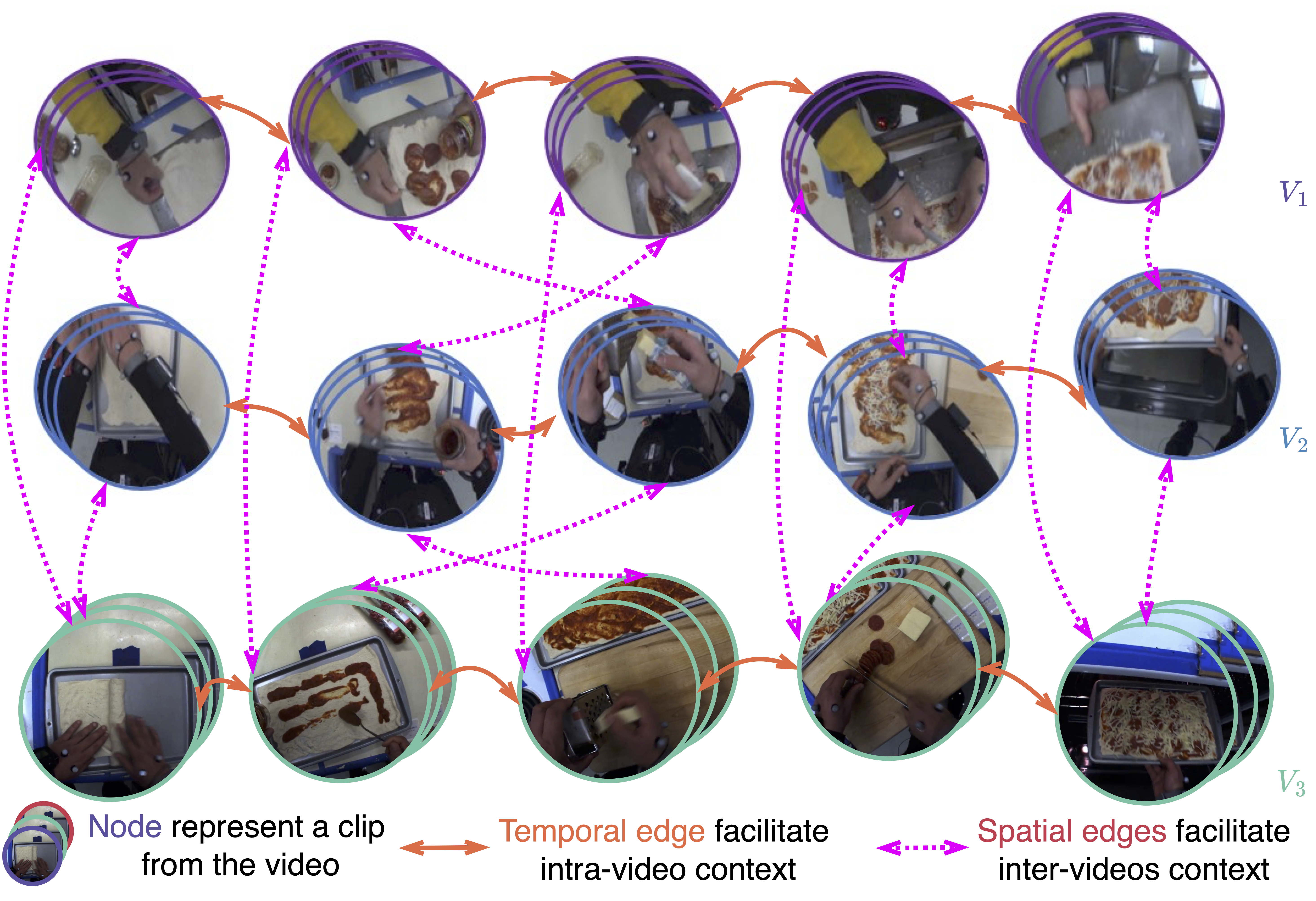

We propose the Graph-based Procedure Learning (GPL) framework for procedure learning. GPL creates a novel UnityGraph that represents all the task videos as a graph to encode both intra-video and inter-video context. We achieve an improvement of 2% on third-person datasets and 3.6% on EgoProceL.

Siddhant Bansal, Chetan Arora, C.V. Jawahar

Winter Conference on Applications of Computer Vision (WACV), 2024

Paper / Download the EgoProceL Dataset / Project Page / Video

See all publications

Miscellaneous

Invited Talks

- Egocentric Videos for Procedure Learning @ Indian Conference on Computer Vision, Graphics and Image Processing (ICVGIP 2022) (Vision India). [slides; tweet; linkedin]

- Egocentric Videos for Procedure Learning @ IPLAB, University of Catania [slides; tweet]

- Egocentric Videos for Procedure Learning @ Computer Vision Centre, Universitat Autònoma de Barcelona [slides; tweet]

Workshop Organizer

- Third Joint Egocentric Vision (EgoVis) Workshop @ CVPR 2026

- Second Joint Egocentric Vision (EgoVis) Workshop @ CVPR 2025

- First Joint Egocentric Vision (EgoVis) Workshop @ CVPR 2024

- Joint International 3rd Ego4D and 11th EPIC Workshop @ CVPR 2023

- 2nd International Ego4D Workshop @ ECCV 2022

Conference Reviewer

- CVPR 2022, 2023, 2024, 2026

- ICCV 2023, 2025 (outstanding reviewer)

- ECCV 2022, 2024, 2026

- WACV 2023, 2024

- ICRA 2025

Journal Reviewer

- IEEE Transactions on Pattern Analysis and Machine Intelligence (T-PAMI)

- International Journal of Computer Vision (IJCV)

- Computer Vision and Image Understanding (CVIU)

Workshop Reviewer

Thesis